In Industry 4.0 environments, competitive advantage comes less from mechanical automation alone than from how effectively a factory translates raw machine signals into actionable business insights. Many manufacturers invest heavily in high-speed equipment, only to find that without a structured IoT data pipeline for their packaging systems, advanced machines behave like isolated assets rather than a coordinated production network.

Installing sensors on a case packer or palletizer is the easy part. The real engineering challenge, and the key to operational efficiency, lies in building a scalable, unified data architecture that connects the factory floor to the boardroom.

Table of Contents

- The Core Challenge: Overcoming Data Fragmentation

- The Four Layers of an Industrial Data Pipeline

- Achieving Total Operational Transparency

- Hard Data: The Impact of Packaging Line Data Integration

- Case Study: Evolving into a Closed-Loop Intelligent System

- The Broader Shift in Factory Design

- Digitize Your Packaging Floor with Joyda Totalpack

1. The Core Challenge: Overcoming Data Fragmentation

In real-world packaging environments, the biggest bottleneck is rarely hardware performance; it is data fragmentation. When case erectors, labelers, and palletizers operate independently with minimal data exchange, production reports must be generated manually after shifts conclude. Under this legacy model, downtime causes remain unclear, and establishing a unified KPI system for Overall Equipment Effectiveness (OEE) is practically impossible.

Packaging lines generate an immense volume of high-frequency data. Without a structured pipeline to capture, filter, and route this information, most of it is never recorded or analyzed. A robust industrial data pipeline closes this gap by ensuring that every machine node exchanges data with the rest of the line, transforming the packaging line from a series of independent mechanical systems into a unified, data-driven operational platform.

2. The Four Layers of an Industrial Data Pipeline

A complete data pipeline is built on a four-layer architecture designed to support data quality, low latency, and fast decision-making.

- Data Acquisition (Sensors & PLCs): The foundation of the pipeline. Machines continuously generate raw electrical signals, monitoring cycle times, servo temperatures, vibration frequencies, and specific fault statuses.

- Edge Layer (Industrial Gateways): Raw data is collected, filtered, and pre-processed close to the machine to keep the network stable and reduce transmission load. This is also where manufacturers must balance edge and cloud computing: edge computing filters high-frequency data locally so that time-critical mechanical responses stay low-latency, while cloud integration aggregates and stores structured data for long-term predictive analytics across the enterprise.

- Data Transmission & Storage (Cloud / Server / MES): The pre-processed, high-frequency machine data is transmitted securely and stored in structured databases, making it ready for complex enterprise analysis.

- Application Layer (Visualization & Decision-Making): The final layer where raw data becomes insight. Centralized SCADA systems, ERP integrations, and live dashboards convert data into trackable KPIs such as OEE, downtime duration, and throughput.
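The four layers above can be sketched end to end in a few lines. This is a minimal illustration, not a production design: the `Reading` fields, the machine name `case_packer_1`, the fault code `17`, and the in-memory list standing in for a database are all assumptions made for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Reading:
    machine_id: str
    cycle_time_s: float
    fault_code: int  # 0 means "no fault" in this sketch (assumed convention)


def acquire() -> list[Reading]:
    """Layer 1: stand-in for PLC/sensor reads, using hard-coded samples."""
    return [
        Reading("case_packer_1", 2.0, 0),
        Reading("case_packer_1", 2.1, 0),
        Reading("case_packer_1", 6.5, 17),
        Reading("case_packer_1", 2.0, 0),
    ]


def edge_summarize(readings: list[Reading]) -> dict:
    """Layer 2: collapse raw readings into one compact summary record."""
    return {
        "machine_id": readings[0].machine_id,
        "cycles": len(readings),
        "mean_cycle_time_s": round(mean(r.cycle_time_s for r in readings), 3),
        "fault_codes": sorted({r.fault_code for r in readings if r.fault_code != 0}),
    }


def store(database: list[dict], record: dict) -> None:
    """Layer 3: an in-memory list standing in for a database or MES."""
    database.append(record)


def dashboard_line(record: dict) -> str:
    """Layer 4: render a stored record as a human-readable KPI line."""
    return (
        f"{record['machine_id']}: {record['cycles']} cycles, "
        f"mean cycle {record['mean_cycle_time_s']} s, "
        f"faults {record['fault_codes']}"
    )


database: list[dict] = []
store(database, edge_summarize(acquire()))
print(dashboard_line(database[0]))
```

The point of the sketch is the hand-off between layers: four raw readings leave the machine, but only one summary record crosses the network and reaches the dashboard.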

3. Achieving Total Operational Transparency

A complete IoT pipeline converts raw sensor signals into analytics, predictive models, and automated decisions, forming the digital backbone of a smart factory.

The shift from reactive to proactive management depends on real-time visibility into the packaging line. When operational signals stream continuously to central dashboards, plant managers can spot bottlenecks as they form and close blind spots, replacing delayed, manual reporting cycles with a continuous data stream. This real-time monitoring improves process control, reduces material waste, and supports end-to-end traceability across the supply chain.
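As a rough illustration of how a dashboard might flag a bottleneck from streamed signals, the sketch below counts completed units per station over a rolling window and reports the slowest station. The station names, the event format, and the 60-second window are hypothetical choices for the example, not a description of any particular SCADA product.

```python
from collections import Counter


def throughput_per_minute(events, now_s, window_s=60.0):
    """Count units each station completed in the last window_s seconds,
    scaled to units per minute."""
    counts = Counter(
        station for station, ts in events if now_s - window_s < ts <= now_s
    )
    return {station: n * 60.0 / window_s for station, n in counts.items()}


def find_bottleneck(rates):
    """Return the station with the lowest throughput in the window."""
    return min(rates, key=rates.get)


# Hypothetical completion events: (station name, timestamp in seconds).
events = (
    [("case_erector", t) for t in range(0, 60, 2)]  # one unit every 2 s
    + [("labeler", t) for t in range(0, 60, 3)]     # one unit every 3 s
    + [("palletizer", t) for t in range(0, 60, 2)]  # one unit every 2 s
)

rates = throughput_per_minute(events, now_s=59.0)
bottleneck = find_bottleneck(rates)
print(rates, bottleneck)
```

A station that produced nothing in the window does not appear in `rates` at all, so a real implementation would also need to treat a silent station as a possible stoppage.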

4. Hard Data: The Impact of Packaging Line Data Integration

Structured IoT data pipelines can significantly improve packaging performance. When a factory shifts its focus from isolated machine speed to holistic data responsiveness, both operational and financial results benefit. The comparison below summarizes the differences.

Smart Factory Data Architecture Impact

| Operational Metric | Without Unified Data Pipeline | With IoT Data Pipeline Integration | Strategic Impact |
| --- | --- | --- | --- |
| Data Collection | Manual, end-of-shift clipboard reporting | Continuous, real-time streaming | Eliminates human error and data latency. |
| Downtime Identification | Reactive (Investigated post-failure) | Immediate & Predictive | Reduces unplanned downtime and protects margins. |
| KPI Tracking (OEE) | Fragmented across different machines | Unified live dashboard tracking | Standardizes performance metrics across all lines. |
| Traceability | Batch-level documentation | Continuous tracking flow | Ensures full supply chain transparency and compliance. |
| System Scalability | Prone to network bottlenecks | High-volume edge filtering | Future-proofs the smart factory data architecture. |

5. Case Study: Evolving into a Closed-Loop Intelligent System

A recent Industry 4.0 packaging system upgrade for a consumer goods manufacturer clearly illustrates how a data pipeline creates tangible value.

Before Integration:

The facility’s machines operated independently. Production reports were compiled manually hours after the shift ended, so downtime causes were identified far too late to salvage daily quotas. The facility had no unified KPI system to track efficiency or quality loss.

After Implementing a Full Data Pipeline:

We deployed a system where sensors capture real-time machine signals across the entire line. Edge devices standardize and filter this data before securely transmitting it. Central systems (MES/ERP) aggregate the production data, while live dashboards visualize performance across every station.
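To make the "standardize" step concrete, here is a minimal sketch of how an edge device might map differently shaped machine payloads onto one shared record layout. The payload field names (`id`, `time_ms`, `err`, `cases`, `name`, `ts`, `status`, `pallets`) and the machine IDs are invented for illustration; they do not reflect the actual deployment or any vendor's format.

```python
def normalize(machine_type: str, raw: dict) -> dict:
    """Map a vendor-specific payload onto one shared record layout."""
    if machine_type == "case_erector":
        return {
            "machine_id": raw["id"],
            "timestamp_s": raw["time_ms"] / 1000.0,  # milliseconds to seconds
            "state": "FAULT" if raw["err"] != 0 else "RUNNING",
            "units": raw["cases"],
        }
    if machine_type == "palletizer":
        return {
            "machine_id": raw["name"],
            "timestamp_s": float(raw["ts"]),
            "state": raw["status"].upper(),
            "units": raw["pallets"],
        }
    raise ValueError(f"no mapping defined for machine type {machine_type!r}")


# Two hypothetical payloads with different shapes and units.
erector = normalize(
    "case_erector", {"id": "CE-01", "time_ms": 1500, "err": 0, "cases": 12}
)
palletizer = normalize(
    "palletizer", {"name": "PAL-01", "ts": 2, "status": "fault", "pallets": 3}
)
print(erector)
print(palletizer)
```

Once every machine reports the same keys, downstream MES/ERP aggregation and dashboards can treat all stations uniformly instead of carrying per-machine special cases.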

Operational Results:

The impact was immediate. Management could instantly identify bottlenecks and abnormal behavior, transitioning the facility from reactive repairs to predictive maintenance. Standardized KPI tracking allowed for the data-driven optimization of throughput, labor allocation, and energy usage. Most importantly, the packaging system evolved into a closed-loop intelligent network where data continuously feeds optimization decisions.

6. The Broader Shift in Factory Design

In modern IoT architecture, the real value lies not in data collection itself, but in the pipeline that turns raw signals into automated, intelligent actions.

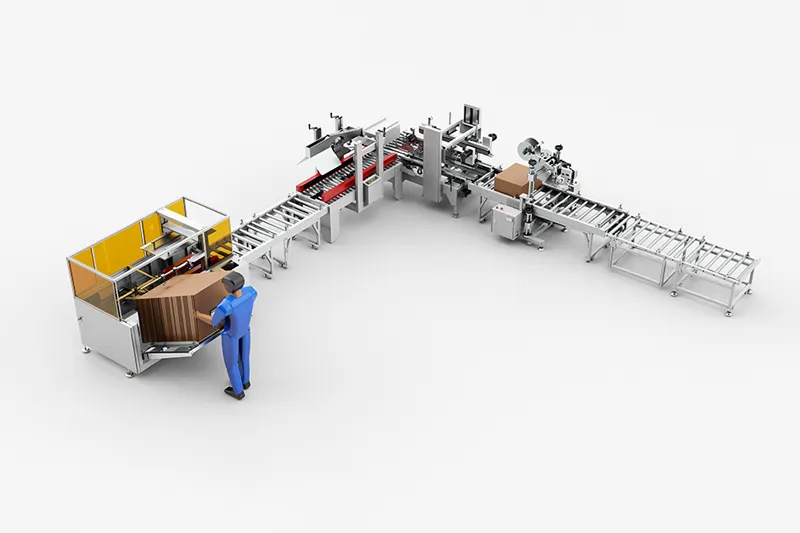

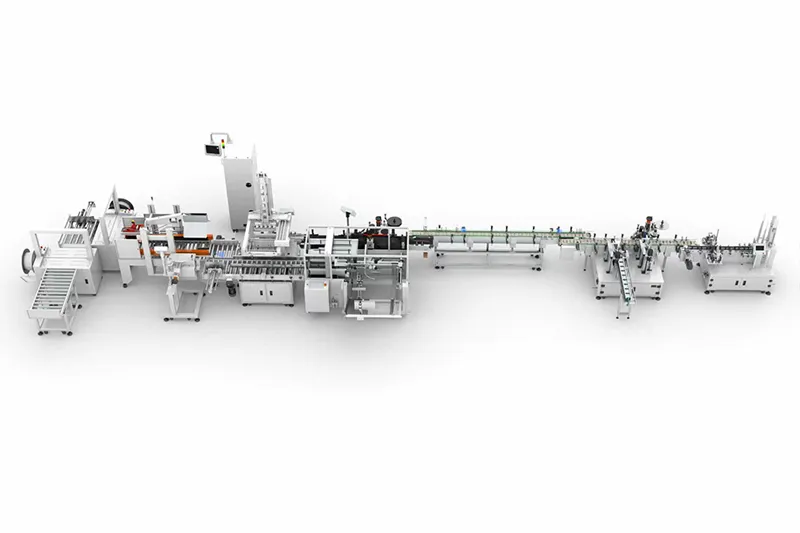

At the macro level, these connected data networks show how Industry 4.0 transforms end-of-line packaging architecture. It reshapes both the physical layout and the operational strategy of the factory floor, turning the packaging line from a cost center into a controllable, scalable, and responsive production asset integrated directly with enterprise logistics.

7. Digitize Your Packaging Floor with Joyda Totalpack

Building a resilient IoT data flow across a manufacturing network requires deep engineering expertise that bridges mechanical hardware and advanced software. The most successful factories treat data flow with the same importance as product flow.

At Joyda Totalpack, we design and deploy intelligent, data-driven packaging architectures that eliminate bottlenecks and unify your factory’s production data. By integrating robust sensors, edge computing, and seamless MES pipelines, we ensure your facility operates at peak efficiency. Contact our integration specialists today to learn how a structured data pipeline can transform your end-of-line packaging from a blind spot into a strategic advantage.

Frequently Asked Questions (FAQ)

1. What exactly is an IoT data pipeline?

An IoT data pipeline is the automated digital infrastructure that collects raw data from machine sensors, processes and filters it at the edge, securely transmits it to a central database or cloud, and visualizes it on dashboards so management can make informed decisions.

2. Why can’t we just connect our machines directly to the cloud without an “Edge Layer”?

Packaging lines generate an immense volume of high-frequency data (thousands of data points per second). Sending all of that raw data directly to the cloud can cause severe bandwidth bottlenecks and latency. The Edge Layer filters out the “noise” and sends only structured, relevant data to the cloud.
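One common way to filter out noise at the edge is a deadband (report-by-exception) filter, which forwards a sample only when it has moved meaningfully since the last forwarded value. The sketch below assumes this technique for illustration; the servo temperature samples and the 0.5 °C threshold are made up, and a real gateway may use a different filtering method.

```python
def deadband_filter(samples, threshold):
    """Keep a sample only when it differs from the last kept value
    by more than threshold. The first sample is always kept."""
    kept = []
    last_value = None
    for timestamp, value in samples:
        if last_value is None or abs(value - last_value) > threshold:
            kept.append((timestamp, value))
            last_value = value
    return kept


# Hypothetical servo temperature samples: (timestamp in s, temperature in °C).
samples = [
    (0, 40.0),
    (1, 40.1),
    (2, 40.05),
    (3, 41.2),
    (4, 41.3),
    (5, 45.0),
]

forwarded = deadband_filter(samples, threshold=0.5)
print(forwarded)
```

Here six raw samples shrink to three forwarded ones, and the jump to 45.0 °C still gets through. The threshold is the design trade-off: too wide and slow drifts are missed, too narrow and little bandwidth is saved.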

3. Will implementing a data pipeline disrupt our current production?

A well-planned integration minimizes disruption. Edge gateways and sensors can often be installed and configured in parallel with ongoing operations. The final network switchover and MES integration are typically scheduled during routine maintenance windows.

4. How does a data pipeline improve Overall Equipment Effectiveness (OEE)?

It provides continuous, real-time measurements of the three OEE factors: Availability, Performance, and Quality. Instead of guessing why OEE is low, engineers can trace micro-stops to the specific machine and fault code responsible and then address the root cause.
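For readers who want the arithmetic, OEE is conventionally the product of the three factors. The sketch below uses the standard definitions with made-up shift numbers, and adds a small helper that totals stop time per machine and fault code; the fault codes and durations are hypothetical.

```python
from collections import defaultdict


def oee(planned_min, downtime_min, ideal_rate_per_min, total_count, good_count):
    """Return (availability, performance, quality, oee) for one shift."""
    run_min = planned_min - downtime_min
    availability = run_min / planned_min
    performance = total_count / (run_min * ideal_rate_per_min)
    quality = good_count / total_count
    return availability, performance, quality, availability * performance * quality


def worst_stop_cause(stops):
    """Sum stop durations per (machine, fault code) and return the largest."""
    totals = defaultdict(float)
    for machine, fault_code, duration_s in stops:
        totals[(machine, fault_code)] += duration_s
    return max(totals.items(), key=lambda item: item[1])


# Made-up shift: 480 planned minutes, 96 minutes down, ideal rate of
# 25 cases per minute, 8640 cases produced, 8208 of them good.
availability, performance, quality, overall = oee(480, 96, 25, 8640, 8208)
print(f"A={availability:.2f} P={performance:.2f} Q={quality:.2f} OEE={overall:.3f}")

# Hypothetical micro-stop log: (machine, fault code, duration in seconds).
stops = [
    ("labeler", "E102", 12.0),
    ("case_packer", "E017", 8.0),
    ("labeler", "E102", 15.0),
    ("palletizer", "E230", 20.0),
]
print(worst_stop_cause(stops))
```

Note that the single longest stop (the palletizer's 20 s) is not the worst cause here; the labeler's repeated short stops add up to more lost time, which is exactly the kind of pattern that manual end-of-shift reporting tends to miss.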

5. Is our machine data secure when transmitted through this pipeline?

Yes, when the pipeline is configured correctly. Modern industrial data pipelines use encryption in transit, secure VPN tunneling, and strict firewall rules at the edge gateway to protect production data against interception or manipulation by external threats.